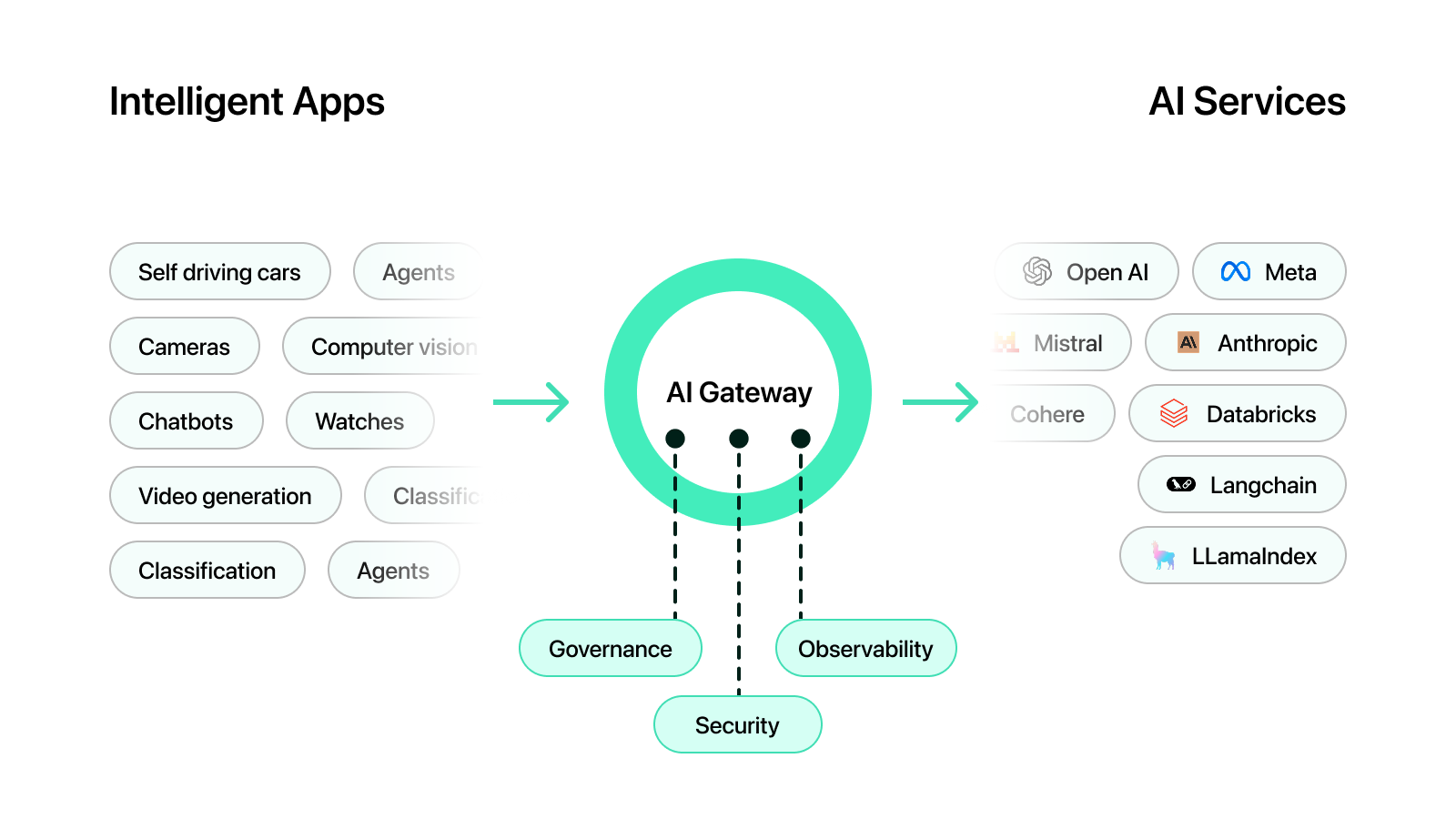

AI Gateway

Unified access to all AI models

Standardize how you interact with different LLM providers using one central interface.

Access 50+ Model Providers

Define and manage multiple LLM endpoints across providers in a single place, enabling centralized API key management and seamless integration.

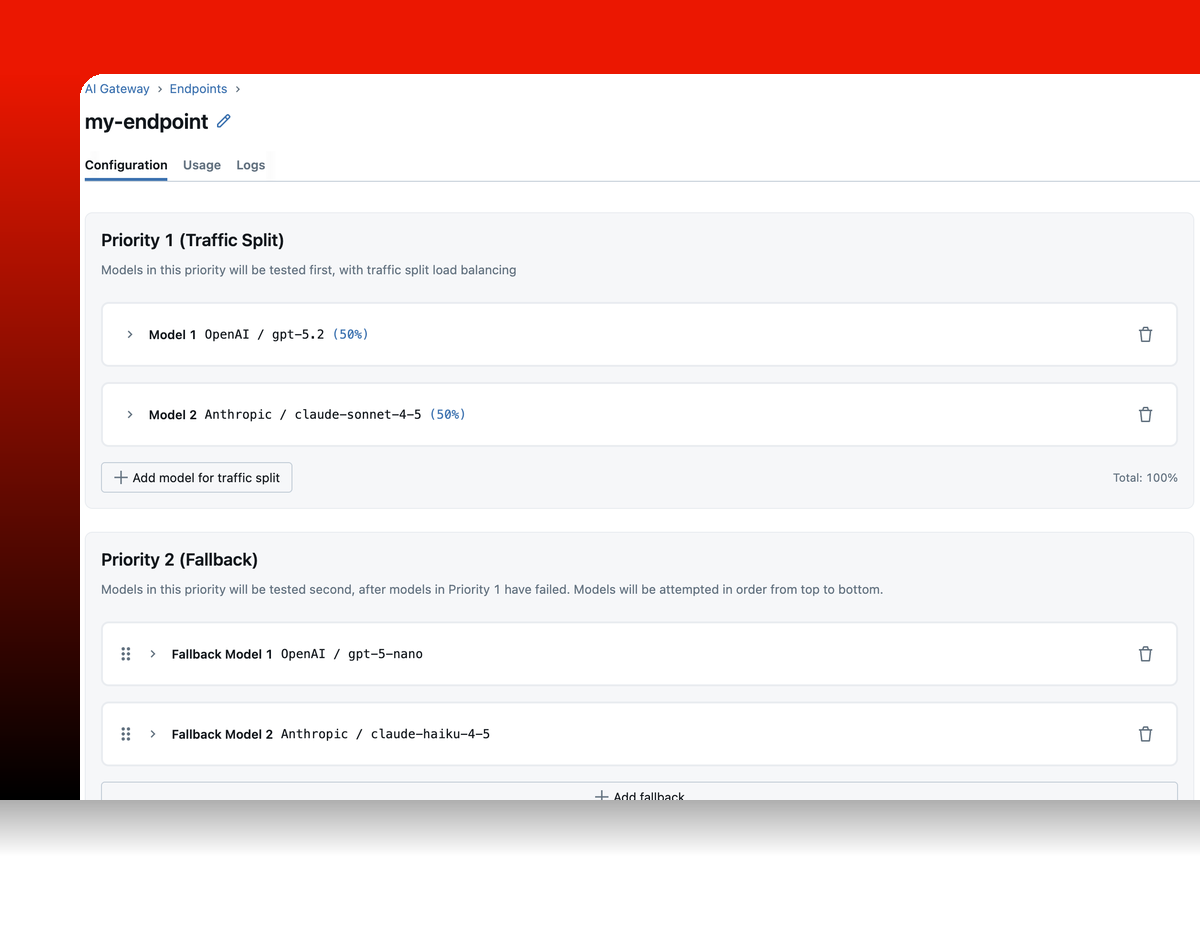

Traffic routing and fallbacks

Split traffic across multiple models for A/B testing and gradual rollouts. Define fallback chains so requests automatically reroute to a backup provider when the primary is unavailable.
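As a rough illustration of the two ideas above, here is a minimal sketch of weighted traffic splitting and an ordered fallback chain. It is not the gateway's implementation: the provider callables, route names, and weights are all hypothetical stand-ins, and a real gateway applies this logic server-side from endpoint configuration.

```python
import random
from typing import Callable, Sequence

# Hypothetical provider callable: takes a prompt and returns a completion,
# or raises an exception when the provider is unavailable.
Provider = Callable[[str], str]


def pick_weighted(routes: Sequence[tuple[str, float]], rng: random.Random) -> str:
    """Pick a route name with probability proportional to its weight."""
    names = [name for name, _ in routes]
    weights = [weight for _, weight in routes]
    return rng.choices(names, weights=weights, k=1)[0]


def call_with_fallback(chain: Sequence[tuple[str, Provider]], prompt: str) -> tuple[str, str]:
    """Try providers in order; return (name, response) from the first that succeeds."""
    errors = []
    for name, provider in chain:
        try:
            return name, provider(prompt)
        except Exception as exc:  # a real gateway would catch narrower error types
            errors.append(f"{name}: {exc}")
    raise RuntimeError("all providers failed: " + "; ".join(errors))


# Illustrative stand-ins for a failing primary and a healthy backup.
def primary(prompt: str) -> str:
    raise ConnectionError("primary provider unavailable")


def backup(prompt: str) -> str:
    return f"backup answer to: {prompt}"


served_by, answer = call_with_fallback([("primary", primary), ("backup", backup)], "hello")
```

In this sketch a 90/10 rollout would be expressed as `pick_weighted([("model-a", 0.9), ("model-b", 0.1)], random.Random())`, with the chosen route placed first in the fallback chain.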

Usage tracking

Every request is recorded as an MLflow trace. Visualize request volume, latency percentiles, token consumption, and cost breakdowns across all endpoints from a unified dashboard.
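To make the dashboard metrics concrete, the sketch below shows how per-request records could be rolled up into request volume, latency percentiles, token totals, and cost per endpoint. The `RequestRecord` fields are hypothetical and are not the actual MLflow trace schema; the percentile uses the simple nearest-rank method.

```python
import math
from dataclasses import dataclass


@dataclass
class RequestRecord:
    # Hypothetical per-request fields; real trace attributes may be named differently.
    endpoint: str
    latency_ms: float
    total_tokens: int
    cost_usd: float


def percentile(values: list[float], pct: float) -> float:
    """Nearest-rank percentile: smallest value with at least pct% of data at or below it."""
    if not values:
        raise ValueError("no values")
    ordered = sorted(values)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


def summarize(records: list[RequestRecord]) -> dict[str, dict[str, float]]:
    """Group records by endpoint and compute the aggregates a usage dashboard would chart."""
    by_endpoint: dict[str, list[RequestRecord]] = {}
    for record in records:
        by_endpoint.setdefault(record.endpoint, []).append(record)

    summary: dict[str, dict[str, float]] = {}
    for endpoint, group in by_endpoint.items():
        latencies = [r.latency_ms for r in group]
        summary[endpoint] = {
            "requests": len(group),
            "p50_ms": percentile(latencies, 50),
            "p95_ms": percentile(latencies, 95),
            "total_tokens": sum(r.total_tokens for r in group),
            "cost_usd": sum(r.cost_usd for r in group),
        }
    return summary
```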

Supported providers

Route requests to any major LLM provider through a single, unified interface. The gateway handles credentials, usage tracking, and failover so your application code stays provider-agnostic.

Get Started in 3 Simple Steps

Set up governed LLM access in minutes. No additional infrastructure required.

1. Start MLflow Server

Launch the tracking server. The AI Gateway is included out of the box.

Estimated time: ~30 seconds

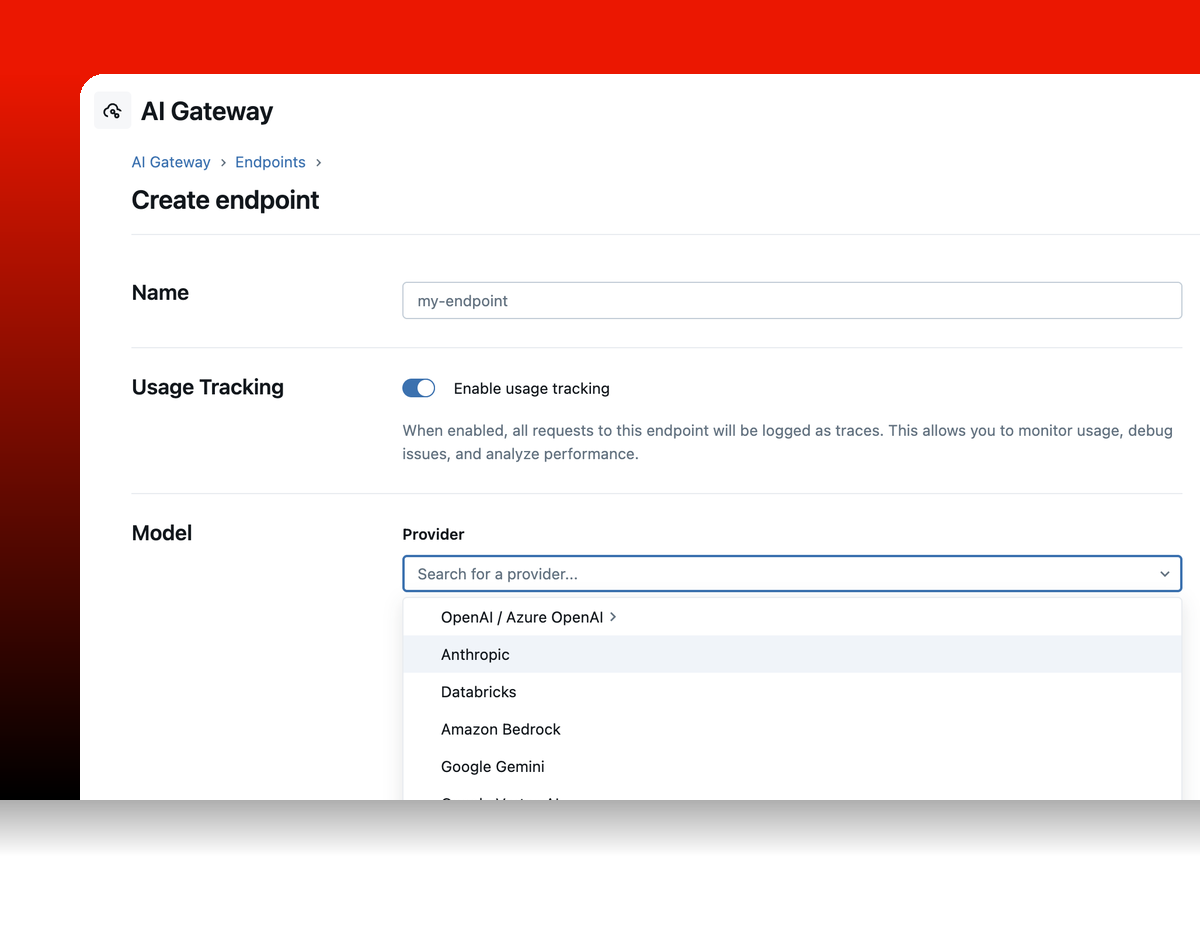

2. Create an Endpoint

Add API keys and configure endpoints from the UI. No server restart is needed.

Estimated time: ~1 minute

3. Query Through the Gateway

Use any OpenAI-compatible SDK. Point the base URL at the gateway and use your endpoint name as the model.

Estimated time: ~30 seconds
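To show what "OpenAI-compatible" means on the wire, here is a minimal standard-library sketch that builds a chat completions request with the endpoint name in the `model` field. The base URL path and the endpoint name are placeholders assumed for illustration, not values confirmed by this page; substitute the base URL your gateway exposes and the endpoint you created in step 2.

```python
import json
import urllib.request

# Assumptions: both values below are placeholders, not documented defaults.
GATEWAY_BASE_URL = "http://localhost:5000/v1"  # hypothetical gateway base URL
ENDPOINT_NAME = "my-chat-endpoint"  # hypothetical endpoint name from step 2


def build_chat_request(base_url: str, endpoint_name: str, prompt: str) -> urllib.request.Request:
    """Build an OpenAI-style chat completions request, using the endpoint name as the model."""
    payload = {
        "model": endpoint_name,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


request = build_chat_request(GATEWAY_BASE_URL, ENDPOINT_NAME, "Say hello in one sentence.")

# To send it against a running gateway, uncomment:
# with urllib.request.urlopen(request) as response:
#     print(json.load(response)["choices"][0]["message"]["content"])
```

With the official `openai` package the equivalent is to construct `OpenAI(base_url=..., api_key=...)` and call `client.chat.completions.create(model=ENDPOINT_NAME, messages=[...])`; only the base URL and model name change relative to calling a provider directly.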
